First steps in Workflow Foundation

I started playing with Windows Workflow Foundation (WF) a few days ago. So far it looks very interesting: it ships with handy pre-built activities, persistence and tracking services, pretty designers for Visual Studio, the ability to host those designers in other applications, web services interaction, and more. Promising.

I just want to take down some notes here so I don't forget what I've learned.

Some Material

- MS E-Learning course

- Hands on Lab

- WF Documentation (Programming model, samples, tools)

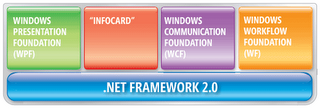

- Windows Workflow Foundation (WF): A set of components, tools, and a designer that developers can use to create and implement workflows in .NET Framework applications. It is part of the Microsoft .NET Framework version 3.0.

- Workflow: A set of activities stored as a model that describes a real-world process. A workflow is designed by laying out activities.

- Activity: A step in a workflow; the unit of execution, reuse, and composition for a workflow.

- Types of workflows (here is a post about how to decide which type to use):

  - Sequential: Consists of activities that execute in a predefined order. Has a clear direction of flow from top to bottom, although it can include loops, conditional tests, and other flow-control structures.

  - State-Machine: Consists of states and transitions that change a workflow instance from one state to another. Although there is an initial state and a final state, the states have no fixed order, and an instance can move through the workflow in one of many paths.

  - Data-Driven: Usually a sequential workflow that contains constrained activity groups and policies. In a data-driven or rules-based workflow, rules that check external data determine the path of a workflow instance. The constrained activities check rules to determine the activities that can occur.
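To keep the difference between the sequential and state-machine types straight, here is a small conceptual sketch in plain Python. It is not the WF API (real WF workflows are .NET classes built from activities and executed by the workflow runtime); `run_sequential`, `StateMachine`, and the order states and events are hypothetical names made up for illustration.

```python
# Conceptual sketch in plain Python; this is NOT the WF API.
from typing import Callable, Dict, List, Tuple

# Hypothetical: model an activity as a step that reads/updates shared workflow data.
Activity = Callable[[dict], None]


def run_sequential(activities: List[Activity], data: dict) -> dict:
    """Sequential workflow: every activity runs once, top to bottom, in a fixed order."""
    for activity in activities:
        activity(data)
    return data


class StateMachine:
    """State-machine workflow: events move the instance between states in no fixed order."""

    def __init__(self, initial: str, final: str,
                 transitions: Dict[Tuple[str, str], str]) -> None:
        self.state = initial
        self.final = final
        self.transitions = transitions  # (current state, event) -> next state

    def fire(self, event: str) -> str:
        key = (self.state, event)
        if key not in self.transitions:
            raise ValueError(f"event {event!r} is not valid in state {self.state!r}")
        self.state = self.transitions[key]
        return self.state

    @property
    def completed(self) -> bool:
        return self.state == self.final


# Sequential: two hypothetical activities always run in the same order.
order = run_sequential(
    [lambda d: d.update(total=d["qty"] * d["price"]),
     lambda d: d.update(approved=d["total"] < 100)],
    {"qty": 3, "price": 20},
)
print(order)  # {'qty': 3, 'price': 20, 'total': 60, 'approved': True}

# State machine: one initial state, one final state, several paths between them.
transitions = {
    ("Created", "approve"): "Approved",
    ("Created", "cancel"): "Closed",
    ("Approved", "ship"): "Closed",
    ("Approved", "cancel"): "Closed",
}
happy_path = StateMachine("Created", "Closed", transitions)
happy_path.fire("approve")
happy_path.fire("ship")
cancelled = StateMachine("Created", "Closed", transitions)
cancelled.fire("cancel")
print(happy_path.completed, cancelled.completed)  # True True
```

Both instances reach the same final state by different routes, which is exactly what a sequential workflow cannot express without piling up conditionals.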

The framework component model

The WF framework consists of three assemblies, which contain the following namespaces:

- System.Workflow.Activities.dll

  - System.Workflow.Activities: Defines activities that can be added to workflows to create and run an executable representation of a work process.

  - System.Workflow.Activities.Configuration: Provides classes that represent sections of the configuration file.

  - System.Workflow.Activities.Rules: Contains a set of classes that define the conditions and actions that form a rule.

  - System.Workflow.Activities.Rules.Design: Contains a set of classes that manage the Rule Set Editor and the Rule Condition Editor dialog boxes.

- System.Workflow.ComponentModel.dll

  - System.Workflow.ComponentModel: Provides the base classes, interfaces, and core modeling constructs that are used to create activities and workflows.

  - System.Workflow.ComponentModel.Compiler: Provides infrastructure for validating and compiling activities and workflows.

  - System.Workflow.ComponentModel.Design: Contains classes for building custom design-time behavior for workflows and activities, and user interfaces for configuring them at design time. The design-time environment lets developers arrange workflows and activities and configure their properties; the classes and interfaces in this namespace can also be used to access design-time services. It includes the TypeBrowserEditor, a handy component that can be reused in your own applications.

  - System.Workflow.ComponentModel.Serialization: Provides the infrastructure for managing the serialization of activities and workflows to and from extensible Application Markup Language (XAML) and CodeDOM.

- System.Workflow.Runtime.dll

  - System.Workflow.Runtime: Classes and interfaces that control the workflow runtime engine and the execution of a workflow instance.

  - System.Workflow.Runtime.Configuration: Classes for configuring the workflow runtime engine.

  - System.Workflow.Runtime.DebugEngine: Classes and interfaces for use in debugging workflow instances.

  - System.Workflow.Runtime.Hosting: Classes that are related to services provided to the workflow runtime engine by the host application.

  - System.Workflow.Runtime.Tracking: Classes and an interface related to tracking services.
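The rules classes in System.Workflow.Activities.Rules are what drive data-driven workflows: a rule is a condition plus "then" and "else" actions. Below is a minimal conceptual sketch in plain Python, assuming a rule set simply evaluates each rule once in priority order (higher priority first, which I believe matches WF) against some data. The real WF rule engine also supports forward chaining, which this sketch ignores. `Rule`, `execute_rule_set`, and the discount/approval rules are hypothetical names, not WF types.

```python
# Conceptual sketch in plain Python; NOT the System.Workflow.Activities.Rules API.
from dataclasses import dataclass, field
from typing import Callable, List

Condition = Callable[[dict], bool]
Action = Callable[[dict], None]


@dataclass
class Rule:
    """Hypothetical rule: IF condition THEN then_actions ELSE else_actions."""
    name: str
    condition: Condition
    then_actions: List[Action] = field(default_factory=list)
    else_actions: List[Action] = field(default_factory=list)
    priority: int = 0  # higher priority runs first in this sketch


def execute_rule_set(rules: List[Rule], data: dict) -> dict:
    """Evaluate each rule once, highest priority first (no forward chaining)."""
    for rule in sorted(rules, key=lambda r: r.priority, reverse=True):
        actions = rule.then_actions if rule.condition(data) else rule.else_actions
        for action in actions:
            action(data)
    return data


rules = [
    Rule("Discount",
         condition=lambda d: d["total"] > 100,
         then_actions=[lambda d: d.update(discount=0.1)],
         else_actions=[lambda d: d.update(discount=0.0)],
         priority=1),
    Rule("NeedsApproval",
         condition=lambda d: d["total"] * (1 - d["discount"]) > 500,
         then_actions=[lambda d: d.update(path="manager_approval")],
         else_actions=[lambda d: d.update(path="auto_approve")]),
]

print(execute_rule_set(rules, {"total": 800}))
# {'total': 800, 'discount': 0.1, 'path': 'manager_approval'}
print(execute_rule_set(rules, {"total": 50}))
# {'total': 50, 'discount': 0.0, 'path': 'auto_approve'}
```

The order of the list does not matter here; the priority is what guarantees `discount` is set before the approval rule reads it, and the data (not a fixed sequence) decides which path the instance takes.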